Two and a half years ago, MIT entered into a research agreement with startup company Commonwealth Fusion Systems to develop a next-generation fusion research experiment, called SPARC, as a precursor to a practical, emissions-free power plant.

Now, after many months of intensive research and engineering work, the researchers charged with defining and refining the physics behind the ambitious tokamak design have published a series of papers summarizing the progress they have made and outlining the key research questions SPARC will enable.

Overall, says Martin Greenwald, deputy director of MIT’s Plasma Science and Fusion Center and one of the project’s lead scientists, the work is progressing smoothly and on track. This series of papers provides a high level of confidence in the plasma physics and the performance predictions for SPARC, he says. No unexpected impediments or surprises have shown up, and the remaining challenges appear to be manageable. This sets a solid basis for the device’s operation once constructed, according to Greenwald.

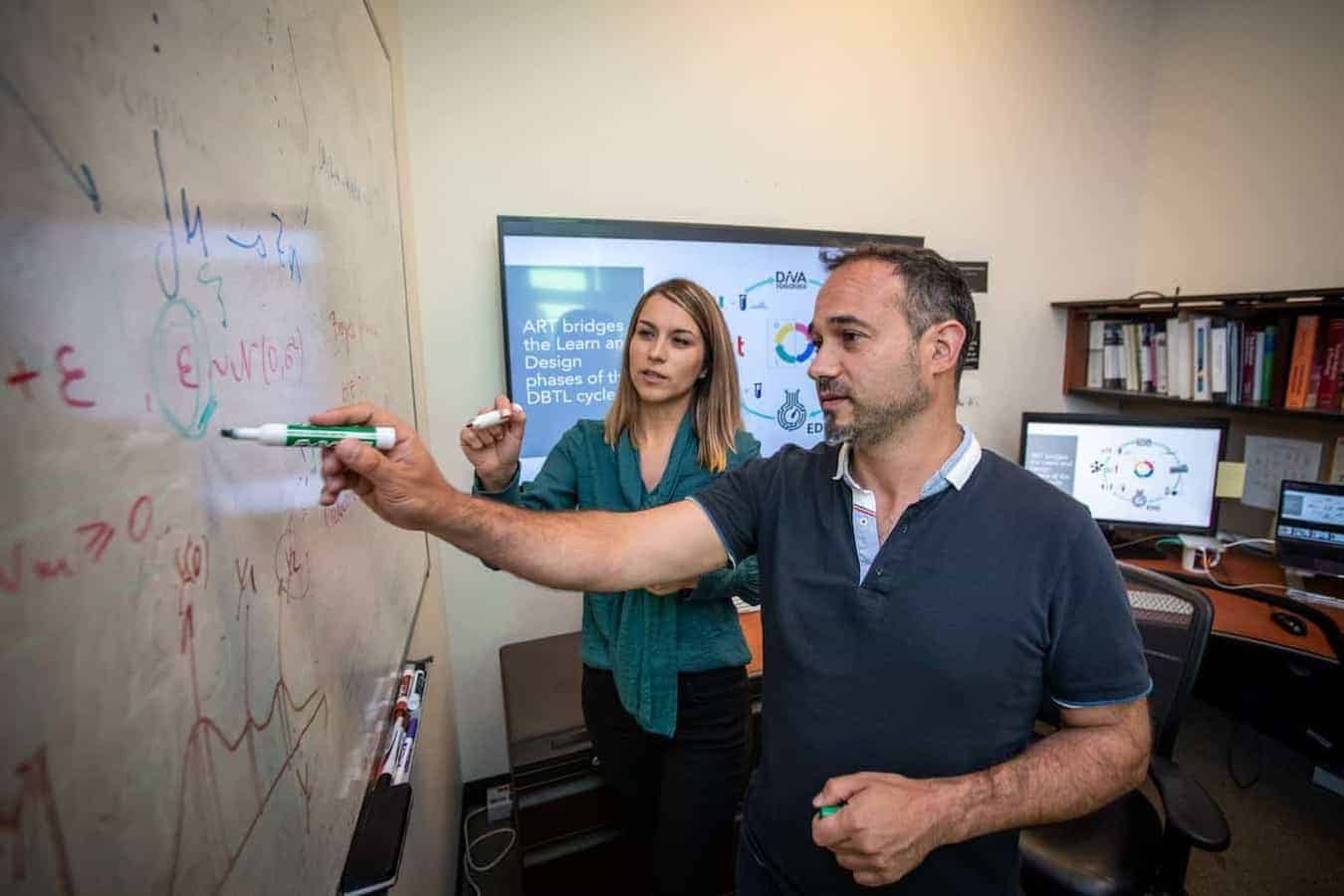

Greenwald wrote the introduction for a set of seven research papers authored by 47 researchers from 12 institutions and published today in a special issue of the Journal of Plasma Physics. Together, the papers outline the theoretical and empirical physics basis for the new fusion system, which the consortium expects to start building next year.

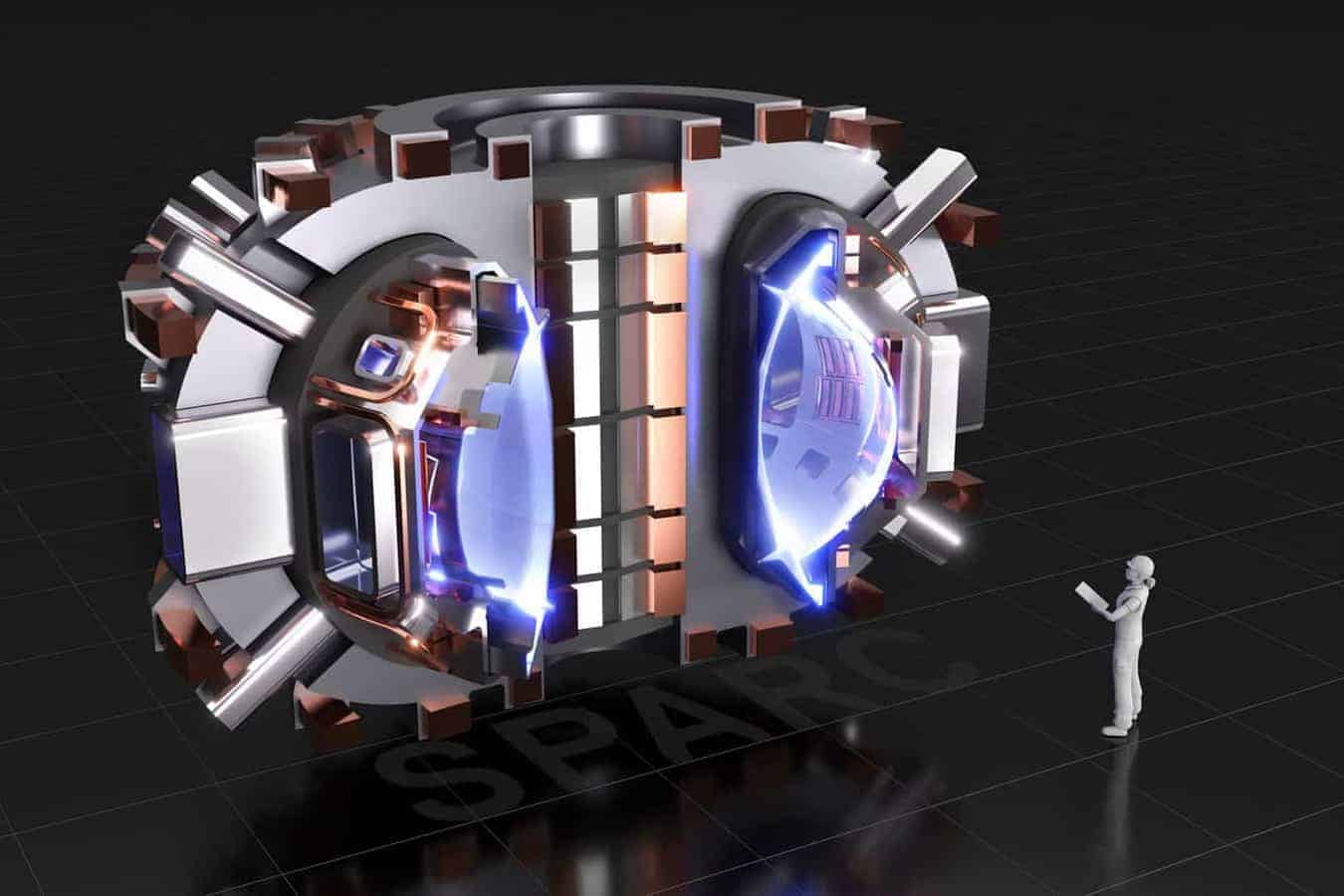

SPARC is planned to be the first experimental device ever to achieve a “burning plasma,” that is, a fusion reaction in which different isotopes of the element hydrogen fuse together to form helium, and in which heat released by the reactions themselves, rather than external input, supplies most of the energy needed to keep the plasma going. Studying the behavior of this burning plasma, something never before seen on Earth in a controlled fashion, is expected to provide crucial information for developing the next step: a working prototype of a practical, power-generating plant.

Such fusion power plants might significantly reduce greenhouse gas emissions from the power-generation sector, one of the major sources of these emissions globally. The MIT and CFS project is one of the largest privately funded research and development projects ever undertaken in the fusion field.

“The MIT group is pursuing a very compelling approach to fusion energy,” says Chris Hegna, a professor of engineering physics at the University of Wisconsin at Madison, who was not connected to this work. “They realized the emergence of high-temperature superconducting technology enables a high magnetic field approach to producing net energy gain from a magnetic confinement system. This work is a potential game-changer for the international fusion program.”

The SPARC design, though about twice the size of MIT’s now-retired Alcator C-Mod experiment and similar in size to several other research fusion machines currently in operation, would be far more powerful, achieving fusion performance comparable to that expected in the much larger ITER tokamak being built in France by an international consortium. This high power in a small package is made possible by advances in superconducting magnets that allow for a much stronger magnetic field to confine the hot plasma.

The SPARC project was launched in early 2018, and work on its first stage, the development of the superconducting magnets that would allow smaller fusion systems to be built, has been proceeding apace. The new set of papers represents the first time that the underlying physics basis for the SPARC machine has been outlined in detail in peer-reviewed publications. The seven papers explore the specific areas of the physics that had to be further refined, and that still require ongoing research to pin down the final elements of the machine design and the operating procedures and tests that will be involved as work progresses toward the power plant.

The papers also describe the calculations and simulation tools used to design SPARC, which have been tested against many experiments around the world. The authors used cutting-edge simulations, run on powerful supercomputers, that were developed to aid the design of ITER. The large multi-institutional team of researchers represented in the new set of papers aimed to bring the best consensus tools to the SPARC machine design to increase confidence that it will achieve its mission.

The analysis done so far shows that the planned fusion energy output of the SPARC tokamak should be able to meet the design specifications with a comfortable margin to spare. It is designed to achieve a Q factor — a key parameter denoting the efficiency of a fusion plasma — of at least 2, essentially meaning that twice as much fusion energy is produced as the amount of energy pumped in to generate the reaction. That would be the first time a fusion plasma of any kind has produced more energy than it consumed.
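The Q factor is simply a ratio of two powers, fusion power produced divided by the external heating power delivered to the plasma. The short Python sketch below makes that arithmetic concrete. The function name and the megawatt figures in the usage line are illustrative assumptions, not SPARC design values, and the sketch uses the plasma-physics definition of Q (heating power into the plasma), not a whole-plant "wall-plug" efficiency.

```python
def q_factor(fusion_power_mw: float, heating_power_mw: float) -> float:
    """Plasma gain Q: fusion power out divided by external heating power in.

    Q = 1 is 'breakeven' (as much fusion power out as heating power in);
    Q = 2 means twice as much fusion power as heating power.
    """
    if heating_power_mw <= 0:
        raise ValueError("heating power must be positive")
    return fusion_power_mw / heating_power_mw


# Hypothetical example: 50 MW of heating producing 100 MW of fusion power.
print(q_factor(100.0, 50.0))
```

Under these made-up numbers the result is Q = 2.0, the minimum gain the article says SPARC is designed to reach.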

The calculations at this point show that SPARC could actually achieve a Q factor of 10 or more, according to the new papers. While Greenwald cautions that the team wants to be careful not to overpromise, and much work remains, the results so far indicate that the project will at least achieve its goals, and specifically will meet its key objective of producing a burning plasma, wherein the self-heating dominates the energy balance.
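Why a higher Q matters for reaching a burning plasma can be seen with a rough energy balance. In a deuterium-tritium reaction, about 17.6 MeV is released, of which the helium nucleus (alpha particle) carries about 3.5 MeV, roughly one fifth; the neutron carries the rest and escapes the plasma. The sketch below assumes, as an idealization, that every alpha deposits all of its energy back into the plasma and that nothing else changes. Under that assumption, the fraction of heating supplied by the plasma itself is aQ / (aQ + 1), where a is the alpha share of fusion energy. The function names are hypothetical and chosen for illustration only.

```python
# Approximate energy split of one D-T fusion reaction (MeV).
E_TOTAL_MEV = 17.6  # total energy released per reaction
E_ALPHA_MEV = 3.5   # share carried by the helium nucleus (alpha particle)
ALPHA_FRACTION = E_ALPHA_MEV / E_TOTAL_MEV  # roughly one fifth


def self_heating_fraction(q: float) -> float:
    """Fraction of total plasma heating supplied by alpha particles.

    Idealized: assumes every alpha deposits all its energy in the plasma.
    Powers are expressed in units of the external heating power.
    """
    if q < 0:
        raise ValueError("Q must be non-negative")
    alpha_power = ALPHA_FRACTION * q  # alpha heating relative to external heating
    return alpha_power / (alpha_power + 1.0)


def burning_threshold_q() -> float:
    """Q at which alpha heating equals external heating (fraction = 0.5)."""
    return 1.0 / ALPHA_FRACTION


for q in (1.0, 2.0, 10.0):
    print(f"Q = {q:4.1f}: self-heating fraction = {self_heating_fraction(q):.2f}")
print(f"alpha heating exceeds external heating for Q > {burning_threshold_q():.1f}")
```

In this simplified picture, self-heating overtakes external heating at a Q of about 5, and at Q = 10 the plasma supplies roughly two thirds of its own heating, which is what "self-heating dominates the energy balance" means in practice.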

Limitations imposed by the Covid-19 pandemic slowed progress a bit, but not much, he says, and the researchers are back in the labs under new operating guidelines.

Overall, “we’re still aiming for a start of construction in roughly June of ’21,” Greenwald says. “The physics effort is well-integrated with the engineering design. What we’re trying to do is put the project on the firmest possible physics basis, so that we’re confident about how it’s going to perform, and then to provide guidance and answer questions for the engineering design as it proceeds.”

Many fine details of the machine design are still being worked out, including the best ways to get energy and fuel into the device and to get the power out, how to deal with any sudden thermal or power transients, and how and where to measure key parameters in order to monitor the machine’s operation.

So far, there have been only minor changes to the overall design. The diameter of the tokamak has been increased by about 12 percent, but little else has changed, Greenwald says. “There’s always the question of a little more of this, a little less of that, and there’s lots of things that weigh into that, engineering issues, mechanical stresses, thermal stresses, and there’s also the physics — how do you affect the performance of the machine?”

The publication of this special issue of the journal, he says, “represents a summary, a snapshot of the physics basis as it stands today.” Though members of the team have discussed many aspects of it at physics meetings, “this is our first opportunity to tell our story, get it reviewed, get the stamp of approval, and put it out into the community.”

Greenwald says there is still much to be learned about the physics of burning plasmas, and once this machine is up and running, key information can be gained that will help pave the way to commercial, power-producing fusion devices, whose fuel — the hydrogen isotopes deuterium and tritium — can be made available in virtually limitless supplies.

The details of the burning plasma “are really novel and important,” he says. “The big mountain we have to get over is to understand this self-heated state of a plasma.”

“The analysis presented in these papers will provide the world-wide fusion community with an opportunity to better understand the physics basis of the SPARC device and gauge for itself the remaining challenges that need to be resolved,” says George Tynan, professor of mechanical and aerospace engineering at the University of California at San Diego, who was not connected to this work. “Their publication marks an important milestone on the road to the study of burning plasmas and the first demonstration of net energy production from controlled fusion, and I applaud the authors for putting this work out for all to see.”

Overall, Greenwald says, the work that has gone into the analysis presented in this package of papers “helps to validate our confidence that we will achieve the mission. We haven’t run into anything where we say, ‘oh, this is predicting that we won’t get to where we want.’” In short, he says, “one of the conclusions is that things are still looking on-track. We believe it’s going to work.”

from ScienceBlog.com https://ift.tt/34b3Jlr