Quantum information ‘teleported’ at Fermilab, Caltech represents step toward quantum internet

A viable quantum internet, a network in which information stored in qubits is shared over long distances through entanglement, would transform the fields of data storage, precision sensing and computing, ushering in a new era of communication.

This month, scientists at Fermi National Accelerator Laboratory—a U.S. Department of Energy national laboratory affiliated with the University of Chicago—along with partners at five institutions took a significant step in the direction of realizing a quantum internet.

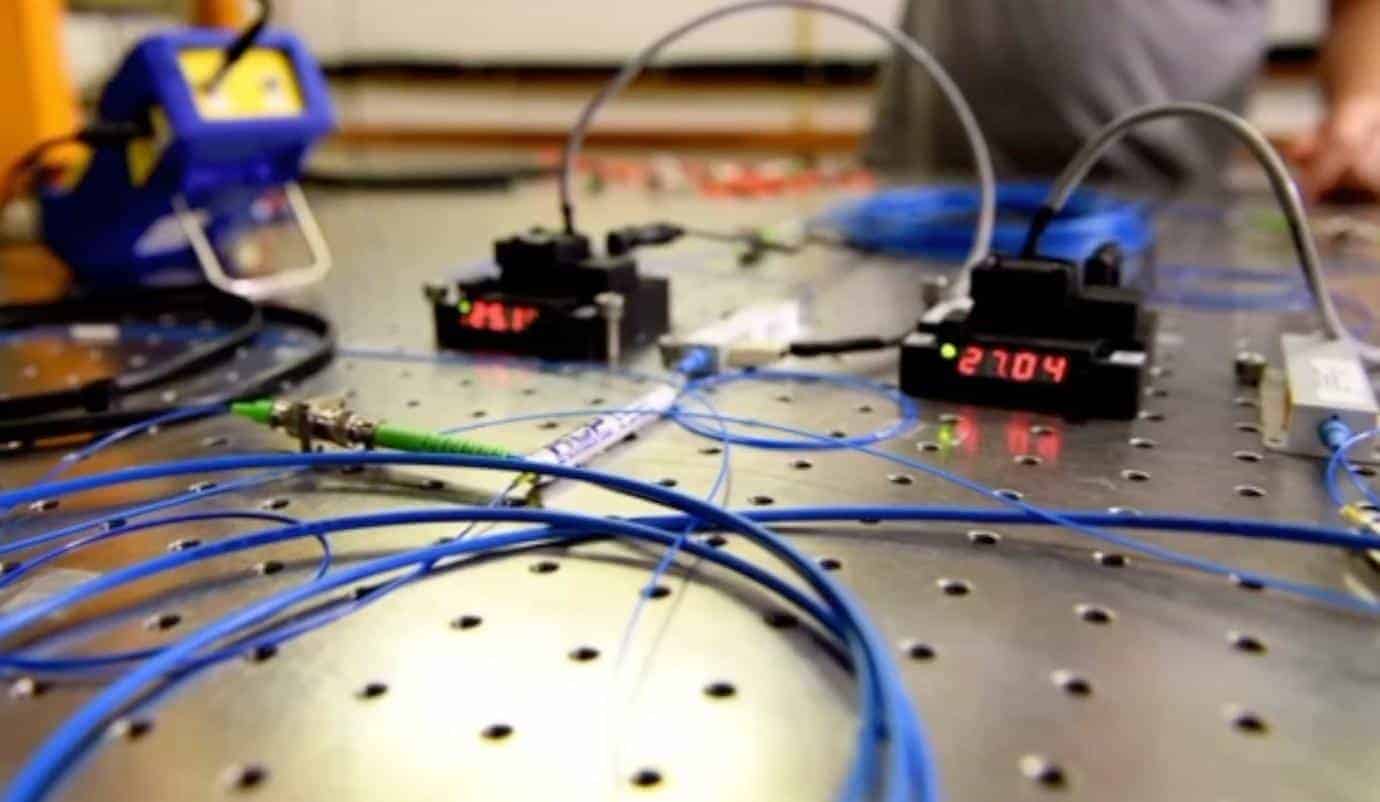

In a paper published in PRX Quantum, the team presents for the first time a demonstration of a sustained, long-distance teleportation of qubits made of photons (particles of light) with fidelity greater than 90%.

The qubits were teleported over a fiber-optic network 27 miles (44 kilometers) long using state-of-the-art single-photon detectors, as well as off-the-shelf equipment.

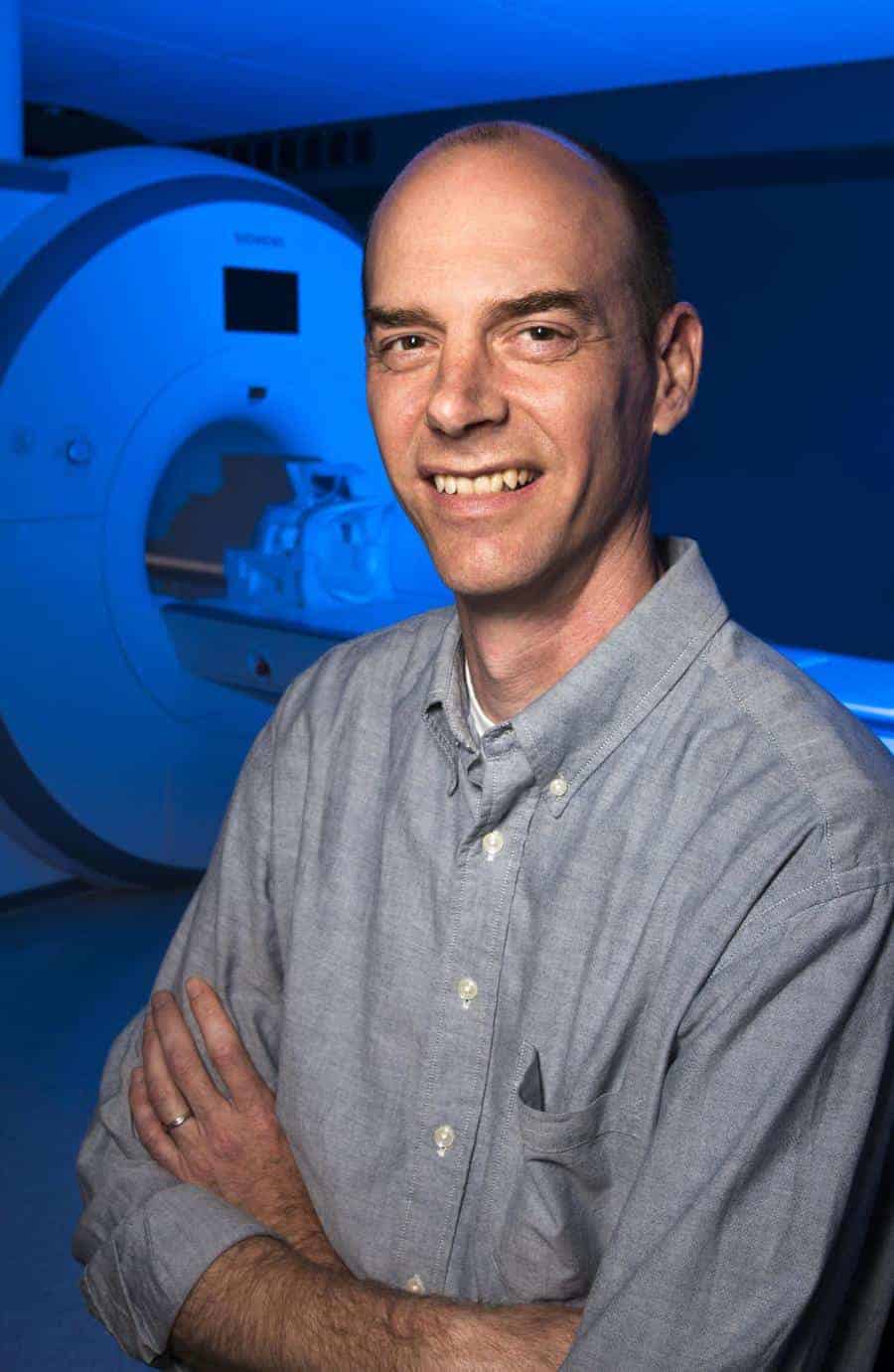

“We’re thrilled by these results,” said Fermilab scientist Panagiotis Spentzouris, head of the Fermilab quantum science program and one of the paper’s co-authors. “This is a key achievement on the way to building a technology that will redefine how we conduct global communication.”

The achievement comes just a few months after the U.S. Department of Energy unveiled its blueprint for a national quantum internet at a press conference at the University of Chicago.

Linking particles

Quantum teleportation is a “disembodied” transfer of quantum states from one location to another. The quantum teleportation of a qubit is achieved using quantum entanglement, in which two or more particles are inextricably linked to each other. If an entangled pair of particles is shared between two separate locations, the information encoded in a qubit can be teleported from one location to the other, no matter the distance between them.
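For readers who want to see the idea in miniature, the following is a minimal sketch of the standard textbook teleportation protocol for a single qubit, simulated with plain Python complex numbers. It is an idealized, noise-free illustration and does not model the photonic hardware, fiber network or detectors used in the experiment. The function names `teleport` and `fidelity` and the example state are assumptions chosen for illustration. Fidelity here means the squared overlap between the state that was sent and the state that arrived.

```python
import math
import random

def teleport(psi, rng):
    """Teleport the one-qubit state psi = (a, b) from qubit 0 to qubit 2.

    Qubits 1 and 2 start as a shared entangled pair (|00> + |11>)/sqrt(2).
    Returns the two classical measurement bits and the receiver's final state.
    """
    s = 1 / math.sqrt(2)
    # Amplitudes keyed by (q0, q1, q2): psi on q0, entangled pair on q1 and q2.
    amp = {(q0, q1, q2): psi[q0] * (s if q1 == q2 else 0)
           for q0 in (0, 1) for q1 in (0, 1) for q2 in (0, 1)}
    # Sender's joint measurement, step 1: CNOT with control q0 and target q1.
    amp = {(q0, q1 ^ q0, q2): v for (q0, q1, q2), v in amp.items()}
    # Step 2: Hadamard gate on q0.
    new = {key: 0j for key in amp}
    for (q0, q1, q2), v in amp.items():
        for r0 in (0, 1):
            sign = -1 if (q0 and r0) else 1
            new[(r0, q1, q2)] += sign * s * v
    amp = new
    # Step 3: measure q0 and q1, picking an outcome with the Born-rule weights.
    outcomes = [(0, 0), (0, 1), (1, 0), (1, 1)]
    probs = [sum(abs(amp[(m0, m1, q2)]) ** 2 for q2 in (0, 1))
             for m0, m1 in outcomes]
    m0, m1 = rng.choices(outcomes, weights=probs)[0]
    norm = math.sqrt(probs[outcomes.index((m0, m1))])
    bob = [amp[(m0, m1, 0)] / norm, amp[(m0, m1, 1)] / norm]
    # The receiver applies corrections chosen by the two classical bits.
    if m1:
        bob = [bob[1], bob[0]]    # bit flip (X)
    if m0:
        bob = [bob[0], -bob[1]]   # phase flip (Z)
    return (m0, m1), bob

def fidelity(sent, received):
    """Squared overlap |<sent|received>|^2 between two pure one-qubit states."""
    overlap = sum(complex(x).conjugate() * y for x, y in zip(sent, received))
    return abs(overlap) ** 2

if __name__ == "__main__":
    rng = random.Random(0)
    state = (0.6, 0.8j)  # hypothetical example state, already normalized
    bits, received = teleport(state, rng)
    print("classical bits sent:", bits)
    print("fidelity:", fidelity(state, received))
```

In this ideal simulation the fidelity comes out at 1 up to rounding error, whichever of the four measurement outcomes occurs. Note that the receiver cannot recover the state until the two classical bits arrive, which is why the protocol needs an ordinary communication channel alongside the entangled pair.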

The joint team, made up of researchers at Fermilab, AT&T, Caltech, Harvard University, NASA’s Jet Propulsion Laboratory and the University of Calgary, successfully teleported qubits on two systems: the Caltech Quantum Network and the Fermilab Quantum Network. The systems were designed, built, commissioned and deployed by Caltech’s public-private research program on Intelligent Quantum Networks and Technologies, or IN-Q-NET.

“We are very proud to have achieved this milestone on sustainable, high-performing and scalable quantum teleportation systems,” said Maria Spiropulu, the Shang-Yi Ch’en professor of physics at Caltech and director of the IN-Q-NET research program. “The results will be further improved with system upgrades we are expecting to complete by the second quarter of 2021.”

Both the Caltech and Fermilab networks, which feature near-autonomous data processing, are compatible both with existing telecommunication infrastructure and with emerging quantum processing and storage devices. Researchers are using them to improve the fidelity and rate of entanglement distribution, with an emphasis on complex quantum communication protocols and fundamental science.

“With this demonstration we’re beginning to lay the foundation for the construction of a Chicago-area metropolitan quantum network,” Spentzouris said.

The Chicagoland network, called the Illinois Express Quantum Network, is being designed by Fermilab in collaboration with Argonne National Laboratory, Caltech, Northwestern University and industry partners.

“The feat is a testament to the success of collaboration across disciplines and institutions, which drives so much of what we accomplish in science,” said Fermilab Deputy Director of Research Joe Lykken. “I commend the IN-Q-NET team and our partners in academia and industry on this first-of-its-kind achievement in quantum teleportation.”

Citation: “Teleportation Systems Towards a Quantum Internet.” Valivarthi et al., PRX Quantum, Dec. 4, 2020, DOI: 10.1103/PRXQuantum.1.020317

Funding: U.S. Department of Energy Office of Science QuantISED program
