In the first blog in this series, we explored engagement and impact readiness for the Research Excellence Framework (REF) 2029. Here, we turn to the second element of assessment: Contribution to Knowledge & Understanding, and what it takes to approach it with evidence and confidence.

Contribution to Knowledge & Understanding (CKU) sits at the core of REF assessment. For institutions preparing submissions, the task is not simply to present strong individual outputs, but to show how research collectively advances knowledge within and across disciplines, and how that work was enabled and supported within the institution’s research environment.

As REF 2029 approaches, most institutions will find that they are not short of high-quality research. The task they now have is to present that research as a coherent, representative, and defensible account of contribution, traceable back to the people, grants, and infrastructure that enabled it.

That distinction matters more than it might first appear.

Beyond completeness

It is tempting to frame CKU readiness as a data completeness problem. If all outputs are captured, the argument goes, selection can proceed with confidence.

But completeness is not the same as representation.

REF panels assess whether a submitted body of work reflects the range and diversity of a unit’s research activity, not simply whether a record exists for every output. A dataset can be complete and still produce a submission that is narrow, uneven, or poorly contextualised.

“The challenge for universities is not simply about capturing and submitting quality research outputs to REF, it is about demonstrating the full diversity and breadth of the research outputs. In choosing which outputs to submit, universities are expected to demonstrate the diverse range of staff contributing to the outputs; the diverse range of disciplines, research methods and output types whilst also ensuring that contributions from inter- and multi-disciplinary collaborations are represented,” says Natalie Dallat, Head of Research Performance, Ulster University.

This distinction has practical implications. In a decoupled framework, where submitted outputs do not need to be linked to specific individuals, institutions still need to demonstrate a substantive connection between research and the environment that enabled it. That requires not just complete records, but well-contextualised ones.

Three areas of risk are worth examining in turn.

Output visibility: what institutions know and what they can prove

In practice, most significant outputs are already known to institutions. Academic workflows, open access deposit requirements, and internal review processes mean that the majority of relevant publications are captured somewhere.

The more common challenge is not absence but unevenness: gaps in coverage that accumulate over time through staff mobility, inconsistent author affiliations, publications linked to grants but not captured locally, and interdisciplinary outputs that fall between Units of Assessment (UoAs).

These are rarely major gaps in institutional systems. But in aggregate, they can affect the completeness and credibility of a submission, particularly in disciplines where research activity may be systematically underrepresented relative to its actual volume.

Addressing this requires two complementary layers. Research information systems such as Symplectic Elements provide structured output capture, validation workflows, and linkage between researchers, publications and grants, creating the audit trail that REF governance demands. An independent, interconnected data layer such as Dimensions then enables cross-checking: surfacing missing outputs, highlighting metadata discrepancies, and providing a broader view of publication activity beyond local records.

“What Dimensions allows institutions to do is essentially hold a mirror up to their own systems. Not to replace internal records, but to ask: is what we’re seeing internally representative of what’s actually out there? For some disciplines or research groups, that comparison can be revealing,” explains Ann Campbell, Director Research Impact & Comparative Analytics at Digital Science.

Together, structured capture and independent validation strengthen confidence in completeness before output selection begins and provide a more defensible evidence base for the decisions that follow.
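To make the cross-checking idea concrete, here is a minimal sketch that compares two lists of DOIs: one exported from internal records and one from an external index. It assumes both sources can supply DOIs for the outputs in question; the function names are hypothetical and this is not how Symplectic Elements or Dimensions actually match records. Outputs without DOIs would need other matching (titles, authors, dates), which this sketch ignores.

```python
def normalise_doi(doi: str) -> str:
    """Lower-case a DOI and strip common resolver prefixes so variants compare equal.
    DOI names are case-insensitive, so lower-casing is safe for matching."""
    doi = doi.strip().lower()
    for prefix in ("https://doi.org/", "http://doi.org/",
                   "https://dx.doi.org/", "http://dx.doi.org/", "doi:"):
        if doi.startswith(prefix):
            return doi[len(prefix):].strip()
    return doi


def coverage_gaps(internal: list[str], external: list[str]) -> dict[str, set[str]]:
    """Hypothetical helper: set differences between internal and external DOI lists."""
    ours = {normalise_doi(d) for d in internal}
    theirs = {normalise_doi(d) for d in external}
    return {
        # Outputs the external index attributes to the institution but local records lack.
        "missing_locally": theirs - ours,
        # Local outputs the external source does not attribute to the institution:
        # often a sign of affiliation or metadata discrepancies worth checking.
        "not_found_externally": ours - theirs,
    }


# Hypothetical DOIs, for illustration only.
internal_records = ["10.1234/A1", "https://doi.org/10.1234/b2", "doi:10.1234/d4"]
external_index = ["10.1234/a1", "10.1234/B2", "10.1234/c3"]

gaps = coverage_gaps(internal_records, external_index)
print(gaps["missing_locally"])       # {'10.1234/c3'}
print(gaps["not_found_externally"])  # {'10.1234/d4'}
```

Normalising identifiers before comparing matters: without it, the same output recorded as `10.1234/A1` in one system and `https://doi.org/10.1234/a1` in another would be reported as a gap in both directions.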

Understanding performance within fields

Once institutions have confidence in the completeness of their records, a second challenge emerges: interpreting performance in a way that is fair and defensible across disciplines.

Raw citation counts rarely tell the full story. Citation norms vary significantly across fields; what constitutes a well-cited output in a fast-moving biomedical discipline looks very different from the equivalent in history or architecture. A paper with 20 citations might be considered relatively modest in one field, but well above average in another.
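A simple way to see why the same count can mean different things is to divide an output's citations by the mean citations of comparable outputs (same field, same publication year). Indicators such as Dimensions' Field Citation Ratio broadly follow this idea, but the function and peer sets below are hypothetical and illustrative, not real REF or Dimensions data, and do not reproduce any product's exact method. A ratio above 1.0 means the output is cited more than the average for its field.

```python
from statistics import mean


def field_normalised_ratio(citations: int, field_citations: list[int]) -> float:
    """Citations of one output divided by the mean citations of comparable outputs.
    `field_citations` is an assumed peer set from the same field and year."""
    baseline = mean(field_citations)
    if baseline == 0:
        raise ValueError("field baseline is zero; ratio is undefined")
    return citations / baseline


# Hypothetical peer sets, for illustration only.
fast_moving_field = [10, 30, 40, 50, 70]  # mean 40
lower_citation_field = [0, 2, 5, 8, 10]   # mean 5

print(field_normalised_ratio(20, fast_moving_field))     # 0.5
print(field_normalised_ratio(20, lower_citation_field))  # 4.0
```

The same 20 citations sit at half the field average in the first case and at four times the average in the second, which is the pattern the paragraph above describes.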

While output selection is typically led by discipline experts within each UoA, decisions are often informed by broader portfolios and mixed indicators. Without appropriate field-level contextualisation, there may be a tendency to overvalue outputs that align with readily interpretable patterns of performance (such as raw citation counts) and to undervalue others, particularly where interdisciplinary research is involved. This can have consequences both for selection and for the narrative presented to panels.

The scale of this variation is visible in the data. Across UK institutions, raw citation counts for outputs in Units of Assessment such as Clinical Medicine or Physics far exceed those in disciplines like History or Art & Design, and yet when performance is measured relative to field norms, the picture shifts substantially. Units that appear modest on raw citations often demonstrate strong or above-average relative contribution when field-normalised indicators are applied. For institutions making selection decisions across multiple UoAs, this difference is not academic: it directly affects which outputs are recognised as genuinely competitive, and which risk being undervalued simply because they sit in lower-citation disciplines.

Average citation counts and field-normalised citation performance across REF Units of Assessment (2014–2021, UK institutions, articles only, using the Dimensions UoA classification).

Field-normalised indicators and disciplinary benchmarking support a more accurate and defensible reading of performance. Dimensions enables field-normalised citation analysis, benchmarking against peer institutions, collaboration pattern analysis, and trend tracking across time.

Peer review remains central to CKU assessment. But contextual data helps institutions approach that peer review better prepared, with a clearer sense of where their research sits within its field and a stronger basis for the interpretive narrative they are expected to provide.

From individual outputs to coherent thematic narratives

CKU submissions are strongest when outputs form a coherent intellectual narrative. Panels respond to thematic depth and the sustained advancement of knowledge, not to isolated high-performing items, however well cited they may be.

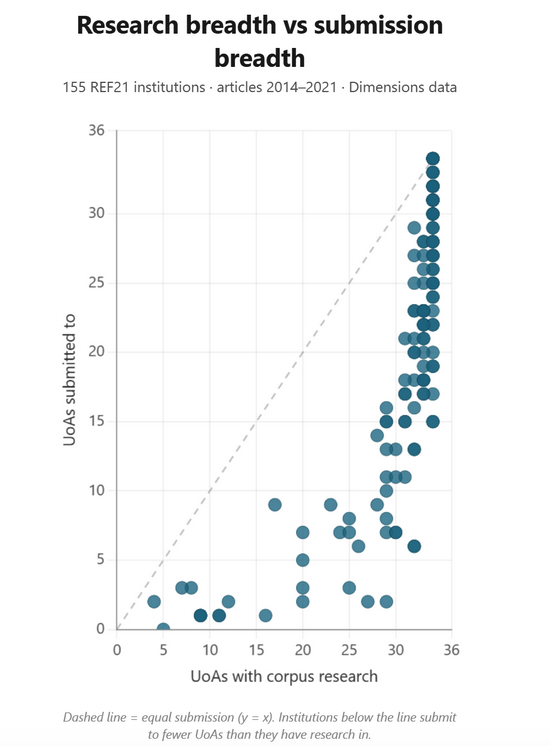

That makes output selection a genuinely strategic exercise, and the scale of the choices involved is considerable. Analysis of REF21 submission patterns shows that the typical institution produced eligible research across 33 of the 34 UoAs but submitted to just 20. In nearly one in five cases where an institution had a meaningful body of research within a UoA, that UoA received no submission at all. Even within the UoAs that institutions chose to submit to, the median coverage rate was under 7%.

The submitted profile, in other words, represents a deliberately selective slice of a much broader underlying research base. That selectivity is appropriate, since REF rewards quality over volume, and strategic narrowing is both permitted and expected. But it means the submitted body of work must tell a coherent story about where an institution’s research genuinely lies. Getting that story right requires a clear view of the full landscape: understanding where depth is concentrated, where disciplines connect, and where gaps might undermine the coherence of what is presented to panels.

Thematic clustering and citation network analysis can help identify areas of concentrated strength and the interdisciplinary bridges that connect them. These analytical approaches surface patterns that may not be visible when outputs are reviewed individually, and support the kind of coherent story that distinguishes a strong CKU submission.
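As one illustration of citation network analysis, the sketch below uses bibliographic coupling (two outputs are linked when they cite the same references) and groups linked outputs into clusters with a small union-find. This is a deliberately simple, swapped-in technique chosen for illustration; it is not how Dimensions or any other product clusters research, and the outputs, reference lists and `threshold` parameter are hypothetical.

```python
from itertools import combinations


def coupling_strength(refs_a: set[str], refs_b: set[str]) -> int:
    """Bibliographic coupling: the number of references two outputs share."""
    return len(refs_a & refs_b)


def cluster_by_coupling(outputs: dict[str, set[str]], threshold: int = 2) -> list[set[str]]:
    """Group outputs into connected components, linking any two outputs that
    share at least `threshold` references (simple union-find)."""
    parent = {o: o for o in outputs}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    for a, b in combinations(outputs, 2):
        if coupling_strength(outputs[a], outputs[b]) >= threshold:
            parent[find(a)] = find(b)  # merge the two components

    groups: dict[str, set[str]] = {}
    for o in outputs:
        groups.setdefault(find(o), set()).add(o)
    return list(groups.values())


# Hypothetical outputs and their reference lists, for illustration only.
outputs = {
    "paper_1": {"r1", "r2", "r3"},
    "paper_2": {"r2", "r3", "r4"},
    "paper_3": {"r4", "r5", "r6"},
    "paper_4": {"r5", "r6", "r7"},
    "paper_5": {"r8"},
}
print(sorted(sorted(c) for c in cluster_by_coupling(outputs)))
# [['paper_1', 'paper_2'], ['paper_3', 'paper_4'], ['paper_5']]
```

With the default threshold the example yields two tight clusters and one isolated output. Lowering `threshold` to 1 merges the two clusters through the single reference `paper_2` and `paper_3` share, a toy version of the "bridge" between areas of strength that such analyses try to surface.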

That coherent story, however, increasingly needs to account for more than publications alone. As REF gives growing recognition to diverse outputs, including datasets, code, preprints, and other research artefacts, alongside traditional publications, institutions also need infrastructure that makes that breadth visible and accessible.

The evidence from REF21 illustrates how far there is still to go: of the 4,000 non-traditional outputs submitted, almost three quarters had unknown or unresolvable locations, and only 244 had DOIs. REF21 Main Panel D assessors noted the wide variety, inconsistent quality, and uneven preservation of practice-based outputs, many of them hosted on fragile, short-lived platforms that were difficult to navigate.

Platforms such as Figshare support persistent access, DOI assignment and the presentation of these materials as part of a coherent research record, ensuring that the full range of contribution is available for assessment.

Scholarly visibility: useful context, not a proxy for contribution

While CKU is fundamentally about intellectual contribution, the broader circulation of research can provide supplementary context. Where outputs are being cited in policy documents, taken up in professional practice, or discussed in specialist communities, those signals can help situate the reach of a body of work, particularly in applied or interdisciplinary fields where impact pathways are diverse.

Altmetric can surface where outputs are being referenced beyond traditional citation indexes, from policy and clinical guidelines to media and public discourse. These signals do not measure contribution to knowledge and understanding, and should not be presented as a substitute for bibliometric evidence or peer judgement. But as additional context, they can help round out the picture, particularly for outputs whose significance may not be fully reflected in citation metrics alone.

The important distinction is that scholarly visibility supports interpretation. It does not replace it.

From reactive selection to confident CKU readiness

CKU readiness is about planning, not last-minute correction. Institutions that approach it most effectively don’t wait until selection is imminent. They build the evidence base over time, ensuring completeness, contextualising performance, and constructing the thematic narrative that panels expect to see.

“What we often see is that institutions feel more confident in REF preparation when they’ve been building the picture gradually over time. It becomes easier to understand where strengths are emerging, how research sits within its field, and how to present that contribution coherently,” says Campbell.

REF readiness is about leading, not lagging. For CKU, that means investing throughout the cycle in the evidence, infrastructure, and contextual understanding that support selection.

Institutions preparing for REF 2029 are increasingly focusing on areas such as:

- ensuring completeness of publication records using interconnected data solutions such as Dimensions to validate coverage beyond institutional systems

- structuring output capture and governance through systems such as Symplectic Elements, linking people, publications and grants

- contextualising performance within disciplinary norms through field-normalised analysis in solutions such as Dimensions

- preserving diverse outputs and research artefacts with persistent identifiers, through repositories like Figshare

- drawing on supplementary context from tools such as Altmetric and Dimensions to situate reach and scholarly engagement

Together, these form the building blocks of a CKU submission that is traceable, representative, and defensible.

Digital Science supports this readiness through interconnected solutions that strengthen evidence and decision-making, while leaving judgement firmly with institutions and REF panels.

Whether you want to audit output visibility and identify gaps in your publication record, benchmark your CKU evidence within disciplinary context, or map the thematic strengths that will anchor your submission narrative, Digital Science can help.

The post REF readiness: evidencing Contribution to Knowledge & Understanding appeared first on Digital Science.